How AI Is Transforming Risk Management, Audit, and Cybersecurity: A Guide for Technology and Business Professionals

Artificial intelligence is reshaping the way organisations identify, assess, and respond to risk. Whether the risk in question is financial, operational, reputational, or cyber in nature, AI is providing capabilities that were simply not available to risk and security professionals even five years ago. The result is faster detection, more accurate assessment, better-informed decision-making, and — when AI is deployed thoughtfully — meaningfully stronger protection against the threats that organisations face.

In this article we explore two of the most important and closely related applications of AI in enterprise risk management: AI in risk and audit functions, and AI in cybersecurity. Both are areas of rapid development, both carry significant implications for how organisations are governed and protected, and both require professionals to develop genuine AI literacy to navigate effectively.

AI in Risk Management and Audit: From Sampling to Comprehensive Analysis

Risk management and internal audit have historically been constrained by a fundamental limitation: the volume of data involved in assessing organisational risk far exceeds what human teams can analyse comprehensively. Traditional audit approaches rely on sampling — reviewing a representative subset of transactions, controls, or records and drawing inferences about the whole. Traditional risk frameworks aggregate risk assessments from across the organisation through periodic processes that produce a snapshot of risk at a point in time rather than a continuously updated picture.

AI is changing both of these realities. Machine learning systems can analyse entire transaction populations rather than samples, identify anomalies and patterns that sampling would miss, and update risk assessments in near real time rather than quarterly or annually. The implications for audit quality, risk detection, and organisational governance are substantial.

AI-Powered Transaction Monitoring and Anomaly Detection

One of the most mature and widely deployed applications of AI in risk and audit is transaction monitoring. AI models trained on historical transaction data can identify patterns that deviate from expected behaviour — unusual amounts, unexpected counterparties, transactions at atypical times, sequences of transactions that individually appear normal but collectively suggest manipulation. Financial institutions have deployed these systems extensively for fraud detection and anti-money-laundering compliance, but the same principles apply across any organisation managing significant transaction volumes.

The advantage of AI anomaly detection over rule-based systems is its ability to identify novel patterns rather than only those that have been explicitly anticipated. A rule-based system flags what it has been told to look for. An AI system learns what normal looks like and flags deviations — catching new types of fraud, manipulation, or error that no rule has been written for yet.
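
To make the idea of "learning what normal looks like" concrete, here is a minimal sketch. It assumes a single account's historical transaction amounts and uses a robust z-score (median and median absolute deviation) in place of a trained model. The function name, the sample figures, and the 3.5 threshold are illustrative assumptions, not taken from any particular product.

```python
from statistics import median

def flag_anomalies(history, new_amounts, threshold=3.5):
    """Flag amounts whose robust z-score against the history exceeds threshold."""
    baseline = median(history)
    # Median absolute deviation: a spread measure that outliers barely move.
    mad = median(abs(v - baseline) for v in history)
    if mad == 0:
        return []  # no spread in the history, so no score can be computed
    flagged = []
    for amount in new_amounts:
        # 0.6745 rescales the MAD so scores are comparable to standard z-scores.
        score = 0.6745 * (amount - baseline) / mad
        if abs(score) > threshold:
            flagged.append((amount, round(score, 1)))
    return flagged

# Hypothetical history for one account, followed by three new transactions.
history = [120, 95, 130, 110, 105, 98, 125, 115, 102, 118]
print(flag_anomalies(history, [108, 4900, 131]))
```

No rule names the 4,900 payment in advance; it is flagged only because it sits far outside the baseline learned from the account's own history. Production systems typically learn across many features at once (counterparty, timing, sequence), but the principle is the same.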

Credit Risk and Loan Assessment

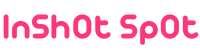

AI is transforming credit risk assessment by enabling models that incorporate far more variables than traditional scorecards, update dynamically as new information becomes available, and identify complex non-linear relationships between risk factors that conventional scorecards and linear statistical models often miss. AI credit models can process cash flow patterns, payment behaviour, market conditions, sector-specific risk indicators, and alternative data sources simultaneously, which can produce more accurate risk assessments that benefit both lenders and borrowers.

For internal audit teams, AI-powered credit risk review tools can assess entire loan portfolios for emerging risk concentrations, covenant breaches, or deteriorating credit quality — work that previously required large teams and significant time. The ability to monitor portfolio risk continuously rather than through periodic reviews enables earlier intervention and better risk outcomes.
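
As a rough illustration of a whole-portfolio review, the sketch below computes sector concentration and lists covenant breaches across every loan rather than a sample. The field names, the 40% concentration limit, and the debt-to-EBITDA covenant are hypothetical choices made for the example.

```python
from collections import defaultdict

def sector_concentration(loans):
    """Return each sector's share of total portfolio exposure."""
    totals = defaultdict(float)
    for loan in loans:
        totals[loan["sector"]] += loan["exposure"]
    portfolio_total = sum(totals.values())
    return {sector: amount / portfolio_total for sector, amount in totals.items()}

def concentrated_sectors(loans, limit=0.4):
    """List sectors whose share of exposure exceeds the (assumed) limit."""
    shares = sector_concentration(loans)
    return sorted(sector for sector, share in shares.items() if share > limit)

def covenant_breaches(loans):
    """List loans whose leverage ratio exceeds the covenant maximum."""
    return [l["loan_id"] for l in loans if l["debt_to_ebitda"] > l["covenant_max"]]

# Hypothetical three-loan portfolio; exposures in thousands.
loans = [
    {"loan_id": "L-001", "sector": "property", "exposure": 600.0,
     "debt_to_ebitda": 3.1, "covenant_max": 4.0},
    {"loan_id": "L-002", "sector": "property", "exposure": 300.0,
     "debt_to_ebitda": 4.6, "covenant_max": 4.0},
    {"loan_id": "L-003", "sector": "retail", "exposure": 100.0,
     "debt_to_ebitda": 2.0, "covenant_max": 3.5},
]
print(concentrated_sectors(loans))
print(covenant_breaches(loans))
```

Because the checks are cheap to run, they can be repeated whenever the underlying data refreshes, which is what makes continuous portfolio monitoring practical.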

Continuous Controls Monitoring

Traditional internal audit operates on a cyclical basis — each area of the business is audited periodically, with the frequency determined by its assessed risk level. AI enables a fundamentally different model: continuous controls monitoring, in which AI systems test key controls against actual transaction data on an ongoing basis, flagging control failures or weaknesses in real time rather than waiting for the next audit cycle.

This shift from periodic to continuous assurance changes the nature of internal audit’s contribution to governance. Rather than providing historical assurance — confirming that controls were operating effectively at some point in the past — continuous monitoring provides current assurance, enabling management and audit committees to have confidence in the current state of controls rather than relying on information that may be months old.
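
A simple sketch of what a continuously run control test can look like is shown below. It assumes two hypothetical payment controls: payments at or above a policy threshold need an approver, and nobody may approve their own payment. The control logic here is a plain rule rather than a learned model; the point is that it runs against every transaction, not a sample.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Payment:
    payment_id: str
    amount: float
    created_by: str
    approved_by: Optional[str]

APPROVAL_THRESHOLD = 10_000.0  # hypothetical policy limit

def control_failures(payments, threshold=APPROVAL_THRESHOLD):
    """Test every payment against both controls and return the failures."""
    failures = []
    for p in payments:
        if p.amount >= threshold and p.approved_by is None:
            failures.append((p.payment_id, "missing approval"))
        elif p.approved_by is not None and p.approved_by == p.created_by:
            failures.append((p.payment_id, "self-approved"))
    return failures

# Hypothetical batch; in practice this would be the full transaction feed.
payments = [
    Payment("P1", 2_500.0, "alice", None),
    Payment("P2", 18_000.0, "bob", None),
    Payment("P3", 12_000.0, "carol", "carol"),
    Payment("P4", 50_000.0, "dave", "erin"),
]
print(control_failures(payments))
```

Scheduling a check like this against each new batch of transactions is what turns a once-a-cycle audit test into current assurance.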

Predictive Risk Modelling

AI is enabling risk functions to move from reactive to predictive postures. Machine learning models can identify the leading indicators of risk events (operational failures, credit deterioration, compliance breaches, fraud) and flag emerging risks before they materialise as losses. This predictive capability is particularly valuable in complex, fast-moving risk environments where reacting to events after they occur leaves too little time to limit the damage.
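
The sketch below shows, under simplifying assumptions, how leading indicators can be turned into a forward-looking risk score. It fits a small logistic regression by gradient descent on two hypothetical indicators (staff turnover rate and share of overdue reconciliations), with labels marking whether a loss event followed. The data is invented for illustration, and a real model would need far more history and proper validation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(rows, labels, lr=0.5, epochs=5000):
    """Fit logistic regression weights with batch gradient descent."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x))) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def predict_risk(weights, bias, x):
    """Estimated probability that a loss event follows these indicator values."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))

# Hypothetical history: (staff turnover rate, share of overdue reconciliations).
rows = [(0.05, 0.10), (0.10, 0.05), (0.08, 0.20),
        (0.30, 0.60), (0.40, 0.55), (0.35, 0.70)]
labels = [0, 0, 0, 1, 1, 1]  # 1 = a loss event followed

weights, bias = train_logistic(rows, labels)
print(predict_risk(weights, bias, (0.06, 0.10)))  # quiet business unit
print(predict_risk(weights, bias, (0.35, 0.65)))  # deteriorating indicators
```

The second unit scores higher than the first before any loss has occurred, which is the practical meaning of a predictive posture.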

Risk professionals seeking a comprehensive understanding of how AI is reshaping risk management and internal audit — including the specific tools being deployed, the governance implications, and the skills required to work effectively in an AI-augmented risk function — will find the AI Awareness guide to AI in risk and audit an authoritative and practical resource covering the full landscape of AI’s impact on these critical functions.

AI in Cybersecurity: Defending at Machine Speed

Cybersecurity represents perhaps the most compelling case for AI in any enterprise function. The threat landscape has become so vast, so dynamic, and so sophisticated that human-only defence is simply not adequate. Attackers are deploying automation and increasingly AI themselves — and defenders who rely purely on human analysis and rule-based tools are operating at a fundamental speed disadvantage. AI is not optional in modern cybersecurity; it is essential.

Threat Detection and Response

The core challenge in cybersecurity is identifying malicious activity among the enormous volume of legitimate activity occurring across an organisation’s systems every second. A large enterprise may generate billions of security events daily — far more than any human team could review. AI-powered security information and event management systems can process this volume of data in real time, correlating signals across disparate systems, identifying patterns that indicate attack activity, and prioritising alerts based on assessed severity and confidence.

Modern AI-driven threat detection systems go beyond signature-based detection, which can only identify known malware and attack patterns, to behavioural analysis that identifies novel threats based on what systems and users are doing rather than by matching against a database of known signatures. This is critical in an environment where attackers regularly modify their tools and techniques specifically to evade signature-based detection.
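
The difference between the two approaches can be sketched in a few lines. The example assumes a simplified event record with `user`, `host`, `process`, and `file_hash` fields, all hypothetical, and treats "behaviour" as nothing more than the set of host and process pairs each user has been seen with before. Real behavioural analytics model far richer features.

```python
from collections import defaultdict

def signature_match(event, known_bad_hashes):
    """Signature-based check: only catches files already known to be bad."""
    return event["file_hash"] in known_bad_hashes

def build_baseline(events):
    """Record which (host, process) pairs each user has been seen with."""
    baseline = defaultdict(set)
    for e in events:
        baseline[e["user"]].add((e["host"], e["process"]))
    return baseline

def behavioural_alerts(baseline, events):
    """Flag events where a user does something outside their own baseline."""
    return [e for e in events
            if (e["host"], e["process"]) not in baseline.get(e["user"], set())]

# Hypothetical data throughout.
known_bad_hashes = {"known-bad-hash"}
past_events = [
    {"user": "asmith", "host": "laptop-17", "process": "excel.exe", "file_hash": "h1"},
    {"user": "asmith", "host": "laptop-17", "process": "outlook.exe", "file_hash": "h2"},
]
new_events = [
    {"user": "asmith", "host": "laptop-17", "process": "excel.exe", "file_hash": "h1"},
    {"user": "asmith", "host": "fin-db-01", "process": "powershell.exe",
     "file_hash": "never-seen-hash"},
]

baseline = build_baseline(past_events)
print([signature_match(e, known_bad_hashes) for e in new_events])
print([e["host"] for e in behavioural_alerts(baseline, new_events)])
```

The second event carries a hash no signature database has seen, so the signature check passes it, while the behavioural check flags it because this user has never run that process on that host.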

Security Operations Centre Augmentation

Security operations centres are under intense pressure — high alert volumes, significant false positive rates, skills shortages, and the relentless pace of the threat environment create conditions where analyst burnout is common and important signals are regularly missed. AI is transforming SOC operations by automating the triage and investigation of routine alerts, enriching alerts with relevant threat intelligence automatically, and enabling analysts to focus their attention on the genuinely complex and novel threats that require human judgement.

AI-powered automated investigation tools can follow the same investigative steps a skilled analyst would (gathering evidence, checking threat intelligence, assessing scope and severity) in minutes rather than hours, and present analysts with a complete picture of an incident rather than a raw alert that requires them to start from scratch. The productivity gains are substantial, and the improvement in detection quality can be tracked directly through fewer missed incidents and faster response times.
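
A minimal sketch of automated enrichment and triage follows. It assumes alerts arrive as simple records, that threat intelligence is a set of known-malicious IP addresses, and that each host has a criticality rating from 1 to 5. The scoring formula is an arbitrary illustration, not a standard.

```python
def triage(alerts, threat_intel, asset_criticality):
    """Enrich each alert and return the list ordered by priority score."""
    enriched = []
    for alert in alerts:
        intel_hit = alert["source_ip"] in threat_intel
        criticality = asset_criticality.get(alert["host"], 1)
        # Illustrative score: severity weighted by asset value, plus a
        # fixed boost when the source is on the threat intelligence list.
        score = alert["severity"] * criticality + (10 if intel_hit else 0)
        enriched.append({**alert, "intel_hit": intel_hit,
                         "criticality": criticality, "score": score})
    return sorted(enriched, key=lambda a: a["score"], reverse=True)

# Hypothetical alerts; the addresses come from documentation-only ranges.
alerts = [
    {"id": "A1", "severity": 3, "host": "kiosk-04", "source_ip": "198.51.100.7"},
    {"id": "A2", "severity": 2, "host": "payroll-db", "source_ip": "203.0.113.50"},
    {"id": "A3", "severity": 4, "host": "dev-laptop-9", "source_ip": "192.0.2.10"},
]
threat_intel = {"203.0.113.50"}
asset_criticality = {"kiosk-04": 1, "payroll-db": 5, "dev-laptop-9": 2}

for alert in triage(alerts, threat_intel, asset_criticality):
    print(alert["id"], alert["score"])
```

The alert with the lowest raw severity ends up first in the queue because it touches a critical system and matches threat intelligence, context an analyst would otherwise have to gather by hand.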

Vulnerability Management and Attack Surface Analysis

Understanding an organisation’s attack surface — all the systems, applications, and interfaces that could potentially be exploited — has become extraordinarily complex in modern cloud and hybrid environments. AI-powered attack surface management tools can continuously discover and catalogue assets, identify vulnerabilities, assess their exploitability in the context of current threat intelligence, and prioritise remediation based on actual risk rather than raw severity scores.

This risk-based prioritisation is critical because the volume of vulnerabilities in any large environment far exceeds the capacity of security teams to remediate them all quickly. AI systems that can accurately identify which vulnerabilities represent the highest actual risk — taking into account exploitability, asset criticality, existing controls, and active threat intelligence — enable teams to direct their remediation effort where it will have the greatest impact on organisational security.
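
The factors listed above can be combined into a single ranking, as in the sketch below. It assumes each vulnerability record carries a CVSS base score plus three hypothetical fields scaled from 0 to 1: an estimated exploit likelihood, the criticality of the affected asset, and the degree to which existing controls mitigate it. The multiplicative formula is one simple choice among many.

```python
def risk_score(vuln):
    """Combine severity with context; higher means remediate sooner."""
    return (vuln["cvss"]
            * vuln["exploit_likelihood"]
            * vuln["asset_criticality"]
            * (1.0 - vuln["control_mitigation"]))

def prioritise(vulns):
    """Order vulnerabilities by contextual risk rather than raw severity."""
    return sorted(vulns, key=risk_score, reverse=True)

# Hypothetical findings.
vulns = [
    {"id": "V1", "cvss": 9.8, "exploit_likelihood": 0.02,
     "asset_criticality": 0.3, "control_mitigation": 0.5},
    {"id": "V2", "cvss": 7.5, "exploit_likelihood": 0.9,
     "asset_criticality": 1.0, "control_mitigation": 0.0},
    {"id": "V3", "cvss": 5.0, "exploit_likelihood": 0.4,
     "asset_criticality": 0.6, "control_mitigation": 0.2},
]

for v in prioritise(vulns):
    print(v["id"], round(risk_score(v), 3))
```

Sorted by raw CVSS, V1 would be fixed first. Once exploitability, asset value, and existing controls are considered, it drops to last place behind two findings that pose more actual risk.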

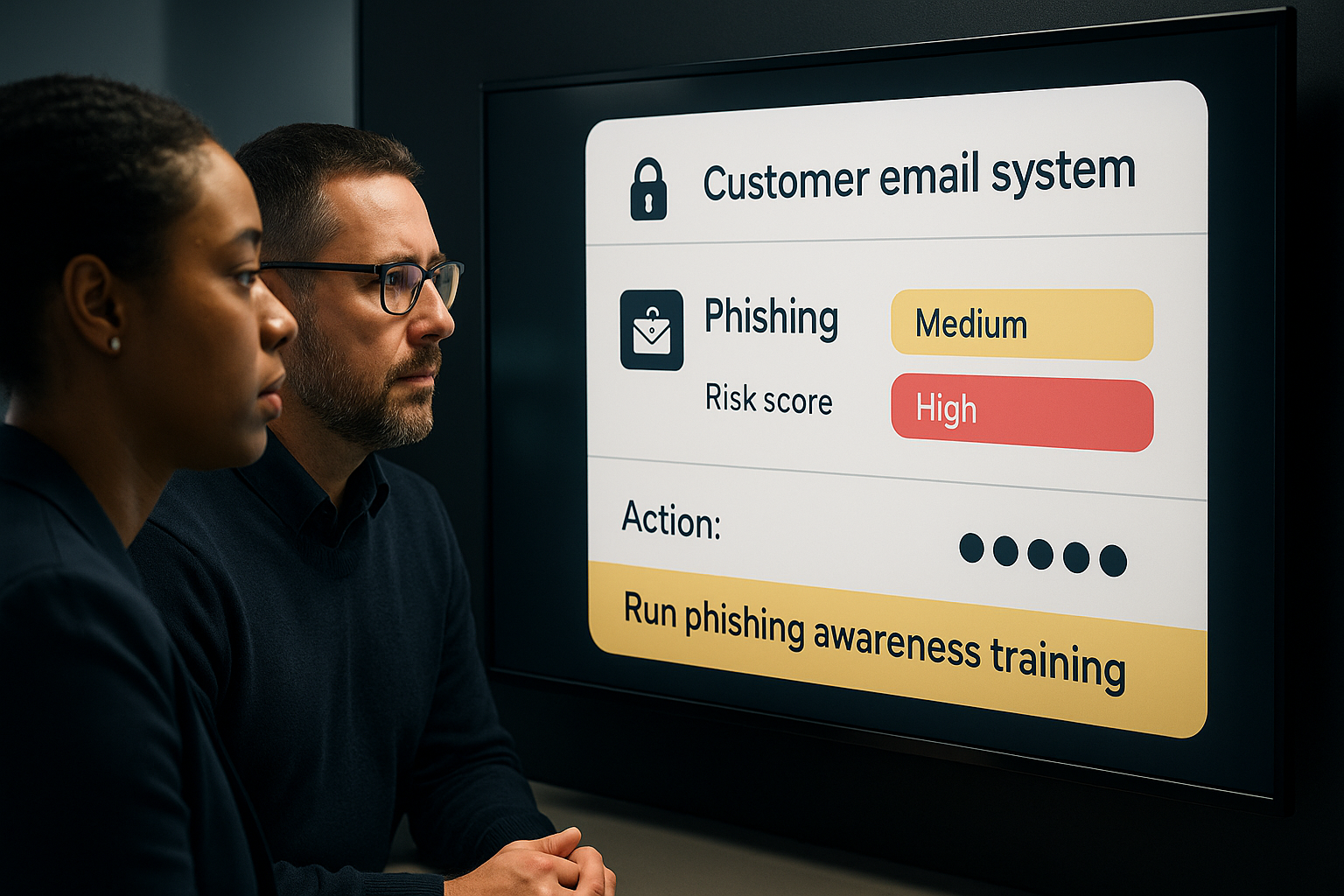

Phishing and Social Engineering Defence

Phishing remains one of the most effective attack vectors, and AI is being applied on both sides of this battle. Attackers are using AI to generate more convincing, personalised phishing content at scale. Defenders are using AI to detect phishing attempts — analysing email content, sender behaviour, URL patterns, and contextual signals to identify malicious messages that evade traditional filters.
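
To show the kind of signals a detection model draws on, the sketch below extracts three of them from a message: the sender's domain, urgency language, and where embedded links actually point. The email structure, the list of urgency terms, and the trusted-domain set are all assumptions for illustration. In a real system, features like these would feed a trained classifier rather than be used as fixed rules.

```python
import re
from urllib.parse import urlparse

URGENCY_TERMS = ("urgent", "immediately", "verify your account", "password expires")

def phishing_signals(email, trusted_domains):
    """Return a list of human-readable warning signals found in the email."""
    signals = []
    sender_domain = email["sender"].rsplit("@", 1)[-1].lower()
    if sender_domain not in trusted_domains:
        signals.append("untrusted sender domain")
    if any(term in email["body"].lower() for term in URGENCY_TERMS):
        signals.append("urgency language")
    for url in re.findall(r"https?://\S+", email["body"]):
        host = (urlparse(url).hostname or "").lower()
        trusted = any(host == d or host.endswith("." + d) for d in trusted_domains)
        if host and not trusted:
            signals.append(f"link to external host {host}")
    return signals

# Hypothetical messages; example.com stands in for the organisation's domain.
trusted_domains = {"example.com"}
suspicious = {
    "sender": "it-support@examp1e-corp.test",
    "body": "Urgent: your password expires today. "
            "Sign in at http://login.examp1e-corp.test/reset now.",
}
routine = {
    "sender": "alice@example.com",
    "body": "Minutes attached, see https://intranet.example.com/minutes for details.",
}
print(phishing_signals(suspicious, trusted_domains))
print(phishing_signals(routine, trusted_domains))
```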

AI-powered security awareness training platforms are also changing how organisations build human resilience against social engineering — using adaptive learning to target training to individual risk profiles, simulating realistic attacks to test and build employee awareness, and providing in-the-moment coaching when employees encounter suspicious content.

The Cyber Threat and Business Impact Balance

One of the most important contributions AI is making to cybersecurity is helping organisations move beyond purely technical risk assessment to a business-impact framing. AI systems can model the potential business impact of different cyber scenarios — quantifying the financial, operational, and reputational consequences of different attack types — enabling organisations to make risk-based investment decisions rather than purely compliance-driven ones. This alignment between cybersecurity risk and business risk is a significant maturity step for many organisations, and AI is making it practically achievable.
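
A business-impact framing can be as simple as the calculation sketched below, which uses annualised loss expectancy (expected events per year multiplied by loss per event) to compare a scenario with and without a proposed control. The scenario, frequencies, and costs are invented figures. Real quantification would use ranges or simulation rather than single point estimates.

```python
def annual_loss_expectancy(annual_frequency, loss_per_event):
    """Expected yearly loss: events per year times loss per event."""
    return annual_frequency * loss_per_event

def net_benefit_of_control(scenario, frequency_with_control, annual_control_cost):
    """Reduction in expected yearly loss, minus what the control costs."""
    before = annual_loss_expectancy(scenario["annual_frequency"],
                                    scenario["loss_per_event"])
    after = annual_loss_expectancy(frequency_with_control,
                                   scenario["loss_per_event"])
    return before - after - annual_control_cost

# Hypothetical scenario: one ransomware event expected every five years.
ransomware = {"annual_frequency": 0.2, "loss_per_event": 2_000_000}

benefit = net_benefit_of_control(ransomware,
                                 frequency_with_control=0.05,
                                 annual_control_cost=120_000)
print(round(benefit))
```

Expressed this way, a security investment can be weighed against other uses of the same budget, which is the shift from compliance-driven to risk-based decision-making described above.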

For technology professionals and business leaders seeking to understand the full scope of AI’s role in modern cybersecurity — from threat detection and SOC operations to AI-powered attack techniques and the governance of AI security tools — the AI Awareness guide to AI in cybersecurity provides comprehensive, current coverage of the field and its implications for organisations of all sizes.

The Connection Between Risk, Audit, and Cybersecurity in an AI-Driven World

Risk management, internal audit, and cybersecurity are more interconnected in an AI-driven world than they have ever been. Cyber risk is now one of the top operational risks for most organisations — and managing it effectively requires the kind of continuous monitoring, real-time data analysis, and predictive capability that AI provides. Internal audit functions need to develop the capability to audit AI systems themselves — assessing whether AI models are accurate, fair, well-governed, and operating as intended. And risk functions need to incorporate AI-specific risks — model risk, data bias, AI system failure, adversarial attacks — into their frameworks alongside traditional risk categories.

The professionals who will lead in these fields over the coming decade are those who combine deep domain expertise with genuine AI literacy — who understand not just how to use AI tools, but how those tools work, where they are reliable and where they are not, and how to govern them responsibly. Building that understanding is not a luxury for technology specialists; it is a core professional requirement for anyone working in risk, audit, or security in an AI-shaped world.

The transformation of these functions by AI is already well advanced — and the pace of change will only accelerate. The organisations and individuals investing now in building real AI capability across risk, audit, and cybersecurity will be substantially better positioned for what comes next.